Automate document processing into Databricks

Automate exporting data from your documents into Databricks by integrating the Affinda Platform with your Databricks account. Achieve straight-through processing by eliminating manual data entry for good.

Get data from your documents into Databricks

Invoices, receipts and contracts

Extract structured data from invoices, receipts and contracts directly into your Databricks Lakehouse - enabling analytics, reporting and data science at scale.

Compliance forms and audit reports

Extract compliance data from forms and audit reports directly into your Databricks Lakehouse - ensuring audit readiness, supporting regulatory reporting and enabling compliance analytics at scale.

Onboarding forms and resumes

Extract employee data from onboarding forms and resumes directly into your Databricks Lakehouse - powering workforce analytics, improving HR planning and enabling talent insights at scale.

Purchase orders and invoices

Extract procurement data from purchase orders and invoices directly into your Databricks Lakehouse - enabling spend analysis, identifying cost savings opportunities and improving supplier management at scale.

How to automate document processing into Databricks

Affinda processes your documents in the background and sends data straight into Databricks.

Automatically send your documents to Affinda

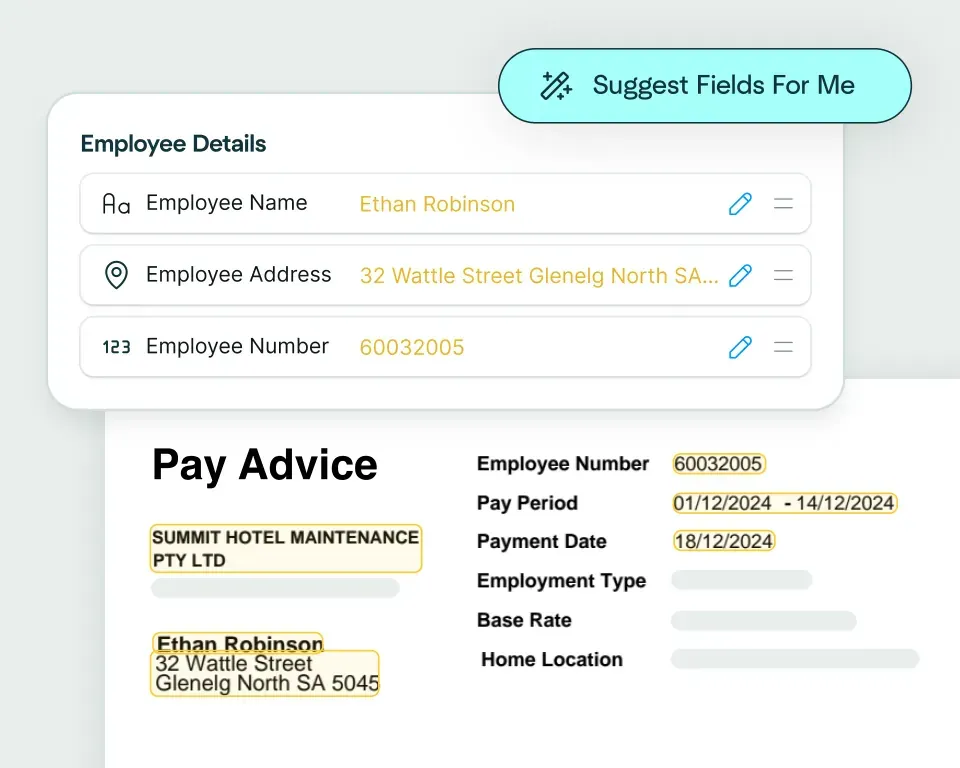

Upload, email or integrate your documents as soon as you receive them.

AI agents extract and validate key data fields

Affinda's AI agents extract and transform your data with superior accuracy, thanks to advanced contextual understanding and machine validation.

See your data appear in Databricks

Affinda sends your data straight into Databricks, automatically populating all the extracted data fields.

Extract any information from any document, fast

Create models in seconds

Upload a claims document and the Affinda Platform will predict the fields you need – like claimant details, policy number, incident date, totals and line items – so you can automate claims document processing in just a few clicks.

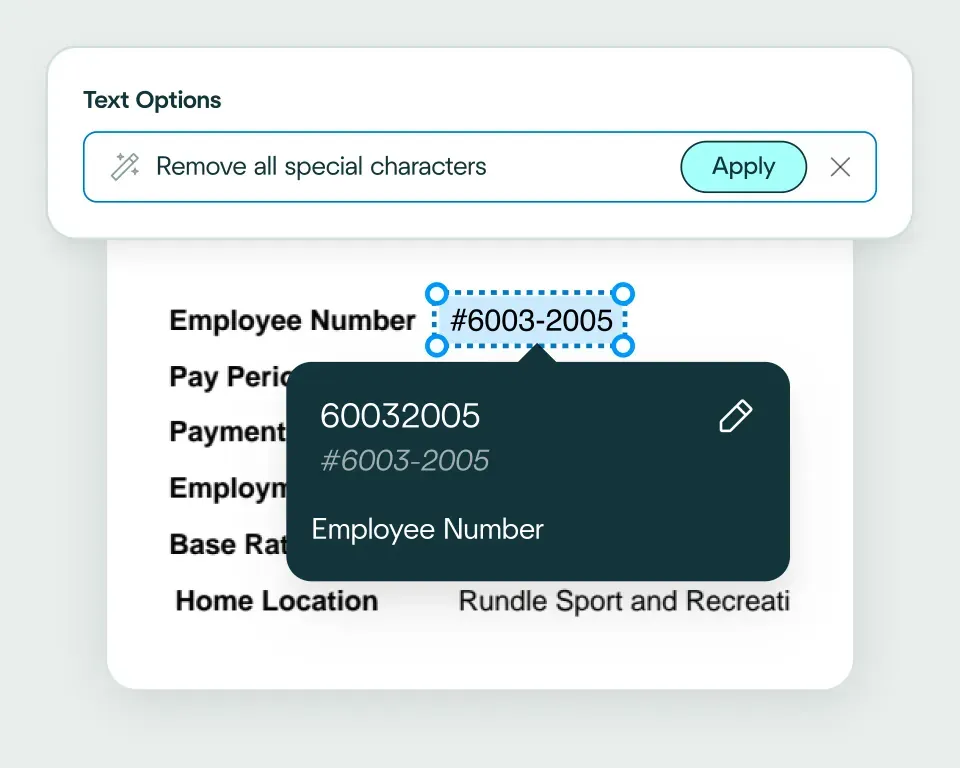

Validate and transform data

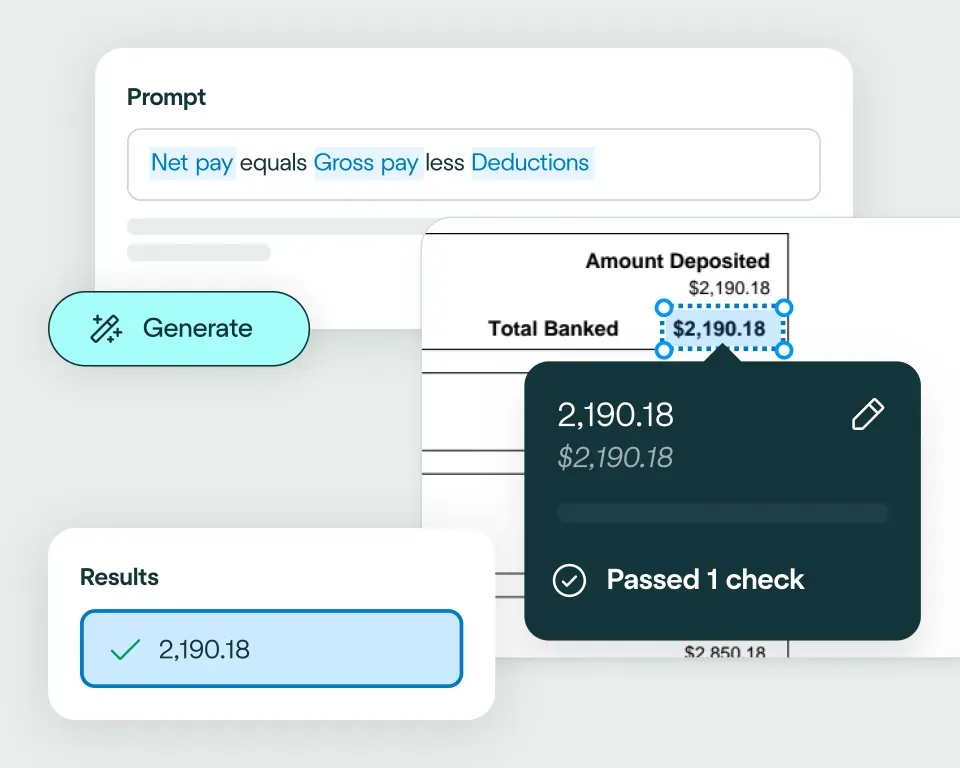

The platform checks extracted claims data against your business rules and transforms it into a format your claims management system expects. That way, it’s ready for workflows like coverage checks, reserving, routing and settlement.

Apply your business logic

Use natural language to write validation rules that match your claims workflows, for example: flag missing fields; check policy numbers match correct formats; validate that document dates are within ranges; check financial consistency, such as line items summing correctly to totals.

Pathway 1: Use the Agent

Create integrations fast, even if you’re not a developer. Choose from 2800+ business systems and describe how you want your claims document processing workflow to connect, using natural language. The Agent will generate the code to make it happen.

Pathway 2: Write your own code

Easily connect Affinda Platform to your claims stack using our client libraries and APIs. Automatically generate type-safe Pydantic models or TypeScript interfaces tailored to your claims documents, so extracted fields map cleanly into your workflows.

No need to talk to sales. Get started now

Sign up for free

Sign up and configure your custom extraction model.

Set up your integration

The Agent works like your own developer - describe how you want data exported, and it builds the integration for you.

Start processing

Send your files to Affinda and watch as the data automatically populates into your downstream system.

Automating their document processes with AI

Combine the best of artificial and human intelligence

Frequently asked questions

Does Affinda integrate with Databricks?

Yes. Affinda's integration with Databricks connects your document processing directly to your data analytics workflows. It automatically extracts, validates and syncs data from invoices, contracts, compliance documents, HR forms and procurement records straight into your Databricks Lakehouse.

With Affinda, you can populate your data lake faster, eliminate manual entry errors and power analytics at scale through a single, automated workflow.

How does the Affinda-Databricks integration work?

Affinda processes documents from any source - email, upload or API - and extracts structured data such as supplier names, invoice numbers, contract values, employee details and procurement amounts using AI agents trained on your specific document types.

Once extracted and validated, this data automatically flows into your Databricks Lakehouse in the format you need, ready for analytics, reporting and data science. Whether it's invoices, contracts, purchase orders or resumes, the data arrives structured and analysis-ready.

You can configure validation rules, custom data transformations and business logic using natural language - ensuring every record meets your quality standards before it reaches your data lake.

What types of documents can Affinda process and send to Databricks?

Affinda processes any document type and sends structured data directly to your Databricks Lakehouse, including:

- Invoices, purchase orders and receipts

- Contracts and supplier statements

- Compliance forms and audit reports

- Onboarding forms, resumes and HR documents

It handles both digital and scanned documents across multiple formats and layouts, using advanced OCR and AI-powered extraction to achieve more than 99% accuracy.

Do I need to manually upload files to Databricks?

No. With Affinda, file uploads can be fully automated using our APIs. To get your documents to Affinda, you can choose from three options:

- Drag and drop documents into your Affinda workspace

- Forward documents via email manually or set up automatic email forwarding

- Connect your document source via API or third-party cloud storage

Once received, data is automatically extracted, validated and sent directly to your Databricks Lakehouse - ready for analytics and reporting.

Can I define my own validation and business rules?

Yes. Affinda lets you configure custom validation and business logic before any data reaches your Databricks Lakehouse.

For example, you can set rules to:

- Verify invoice amounts match contract values

- Flag missing compliance dates or incomplete audit records

- Validate supplier details against approved vendor lists

- Check that employee data meets HR data standards

These checks ensure your data arrives in Databricks clean, consistent and ready for analytics and reporting.
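To make the idea concrete, the kinds of checks listed above can be pictured as plain functions over an extracted record. The sketch below is illustrative only and is not Affinda's API (on the platform, rules are written in natural language). The field names `supplier_name`, `invoice_total`, `contract_value` and `compliance_date`, and the `approved_vendors` set, are hypothetical.

```python
from decimal import Decimal

# Hypothetical field names; a real extraction model defines its own.
REQUIRED_FIELDS = ("supplier_name", "invoice_total", "compliance_date")


def validate_record(record: dict, approved_vendors: set) -> list:
    """Return a list of human-readable issues; an empty list means the record passed."""
    issues = []

    # Flag missing or empty required fields.
    for field in REQUIRED_FIELDS:
        if record.get(field) in (None, ""):
            issues.append(f"missing field: {field}")

    # Validate the supplier against an approved vendor list.
    supplier = record.get("supplier_name")
    if supplier and supplier not in approved_vendors:
        issues.append(f"supplier not on approved vendor list: {supplier}")

    # Verify the invoice amount matches the contract value (exact decimal comparison).
    total = record.get("invoice_total")
    contract = record.get("contract_value")
    if total not in (None, "") and contract not in (None, ""):
        if Decimal(str(total)) != Decimal(str(contract)):
            issues.append("invoice total does not match contract value")

    return issues
```

A record that returns an empty list would be passed on; anything else could be held back for review before it reaches the Lakehouse.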

Can Affinda handle bulk invoice uploads for Databricks?

Yes. Affinda is built for scale. Whether you're processing 50 documents a month or 50,000 a day, the platform handles bulk uploads and automated batch processing without breaking stride.

This makes it ideal for analytics teams, data science initiatives and enterprise organizations that need to populate their Databricks Lakehouse with high volumes of invoices, purchase orders, compliance documents and HR records - all while maintaining accuracy and speed.

How fast can I get started with the Databricks integration?

You can start processing documents into your Databricks Lakehouse in under an hour. Affinda's flexible integration lets you:

- Configure your document extraction models with a few sample files

- Connect to your Databricks Lakehouse via API

- Map extracted fields to your data schema and start sending data immediately
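As a rough picture of the field-mapping step, the sketch below renames extracted fields to table column names and coerces them to typed values. It assumes a hypothetical extraction payload: the keys `invoiceNumber`, `supplierName`, `invoiceDate` and `totalAmount`, the `FIELD_TO_COLUMN` mapping and the `InvoiceRow` class are made up for illustration and are not part of Affinda's or Databricks' APIs.

```python
from dataclasses import dataclass, asdict
from datetime import date
from decimal import Decimal

# Hypothetical mapping from extracted field names to table column names.
FIELD_TO_COLUMN = {
    "invoiceNumber": "invoice_number",
    "supplierName": "supplier_name",
    "invoiceDate": "invoice_date",
    "totalAmount": "total_amount",
}


@dataclass
class InvoiceRow:
    """One row in a hypothetical invoices table."""
    invoice_number: str
    supplier_name: str
    invoice_date: date
    total_amount: Decimal


def to_row(extracted: dict) -> InvoiceRow:
    """Rename extracted fields to column names and coerce them to typed values."""
    renamed = {col: extracted[field] for field, col in FIELD_TO_COLUMN.items()}
    return InvoiceRow(
        invoice_number=str(renamed["invoice_number"]),
        supplier_name=str(renamed["supplier_name"]),
        invoice_date=date.fromisoformat(renamed["invoice_date"]),  # expects YYYY-MM-DD
        total_amount=Decimal(str(renamed["total_amount"])),
    )
```

Calling `asdict(to_row(payload))` gives a plain dictionary keyed by column name, which is a convenient shape to hand to whatever loading step your pipeline uses.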

For teams needing deeper customization, Affinda provides full API access and dedicated support to tailor the integration to your specific data workflows and analytics requirements.

Is my financial data secure when using Affinda with Databricks?

Absolutely. Affinda follows recognized security and privacy frameworks, including ISO 27001:2022, SOC 2 and GDPR. Data is encrypted in transit and at rest, with strict role-based access controls and full audit logging. You can also select region-specific data storage to meet your organization's compliance and data residency requirements.

What are the main benefits of integrating Affinda with Databricks?

By integrating Affinda with Databricks, you can:

- Eliminate manual data entry and reduce errors across your data workflows

- Accelerate analytics, reporting and data science initiatives with faster data ingestion

- Power workforce analytics, compliance reporting and procurement spend analysis at scale

- Improve data quality with automated validation before records reach your Lakehouse

- Scale document processing from hundreds to thousands of files without adding headcount

- Gain full visibility into extraction accuracy and data pipeline performance